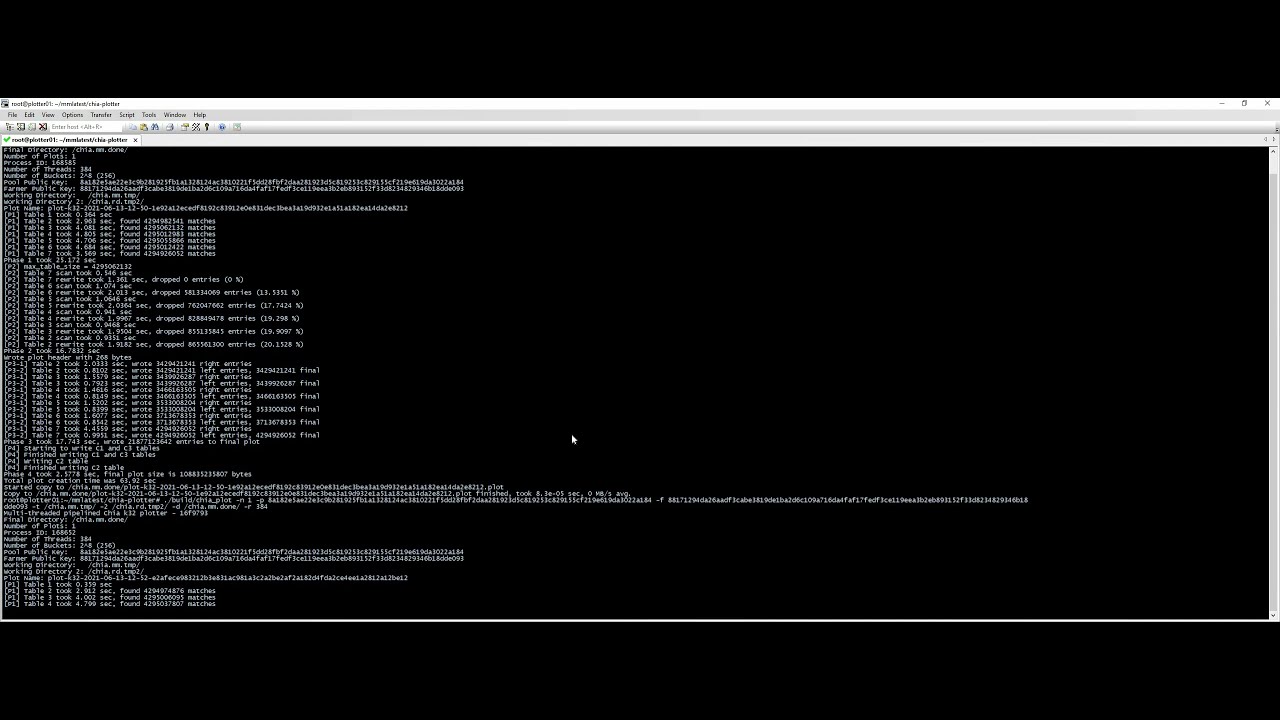

So, we are now at 1 min K32 plots, and while this is a large cluster, it is still not ASICs.

That was done on a machine with 192 CPU cores. To match those cores, it probably has at least 1 TB of RAM, and everything (disks, etc…) is enterprise level.

The next thing to do is to gather that much cash to buy such a machine.

Just wow. I think Chia plotting is getting exciting.

So you are saying a Threadripper 3990x with 512GB RAM, for <5000$ would take at least 3 minutes to stream out a K32 plot? I do wonder what the Threadripper 5xxx series will do …

If it is worth it, an ASIC will be designed to just make that ‘supercomputer’ look like plotting on a phone. Look what happened to BTC mining, look what is happening to ETH. Once ASICs get involved, it is game over for the general purpose crowd.

So it’s one minute, assuming you are writing the plot out to RAM?

Anybody else here super excited that they used a supercomputer for a Chia plot?

To me that is real acknowledgement of Chia…

As discussed in other posts on the forum… it’s not actually creating a plot that always passes the filter yet. It shows speed, but you would need to analyze the challenge before you could build the correct plot. This was building a random plot. K32 is not broken…

I was more thinking streaming it out to the network.

What I’m as yet unclear of is this. Plots as I understand it are basically a pre-computed cache, a lookup table, for a function that would be too slow to compute given a response window.

But just how slow are we talking about?

Chia announcement, AMA around fast plotting June 15… see keybase#announcement

An example of how I think really fast plotting changes potential business models, or, for that matter, the thinking of pundits considering a really fast machine they have no other use for.

Let’s say I rent an on-demand m6g.metal at Amazon (2.5$/hour for 100% utilization). This has 64 cores, 256 GB memory and a 25 Gbit network connection. I add 100 TB of S3 storage with 1.5 PB/month egress. This sets me back around 5,000$ a month in rentals, and let’s say I could produce about 14,400 plots a month (one spit out every 3 minutes). My pricing model can be ‘free’ plotting, and you pay for renting a storage locker on my service (0.3$ per started 24 hours) and an access fee (0.5$ per download).

I could be looking at margins of over 200% with such an operation. I’m sure you could do it much cheaper with some reservations etc., but with everything on demand my risk would be fairly limited, as I have no commitments and can scale up or down fluidly following demand.
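To put rough numbers on that model, here is a minimal back-of-the-envelope sketch in Python. The prices and the 3-minute plot time are the figures from the post above; the function names, the 30-day month, and the assumption that every plot is sold, stored for a given number of days and downloaded once are hypothetical and only there for illustration.

```python
# Back-of-the-envelope check of the "free plotting, paid locker" model.
# Prices come from the post above; everything else is an assumption.

HOURS_PER_MONTH = 30 * 24      # assumed 30-day month
MINUTES_PER_PLOT = 3           # one plot every 3 minutes
MONTHLY_COST = 5000.0          # rough total rental cost quoted above ($)
LOCKER_FEE = 0.3               # $ per started 24 hours of storage
DOWNLOAD_FEE = 0.5             # $ per download


def plots_per_month(minutes_per_plot=MINUTES_PER_PLOT):
    """Number of plots produced in a month at 100% utilization."""
    return HOURS_PER_MONTH * 60 // minutes_per_plot


def monthly_margin(locker_days_per_plot, downloads_per_plot=1):
    """Return (revenue, margin over cost), assuming every plot is sold."""
    revenue_per_plot = (locker_days_per_plot * LOCKER_FEE
                        + downloads_per_plot * DOWNLOAD_FEE)
    revenue = plots_per_month() * revenue_per_plot
    return revenue, (revenue - MONTHLY_COST) / MONTHLY_COST


if __name__ == "__main__":
    for days in (1, 2):
        revenue, margin = monthly_margin(days)
        print(f"{days} locker day(s): revenue ${revenue:,.0f}, margin {margin:.0%}")
```

Under these assumptions the margin over cost is roughly 130% if each plot sits in the locker for one day and passes 200% once the average plot stays for two days, so the result depends heavily on how long customers leave plots in storage and on actually selling every plot produced.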

Let’s face it. Plotting is a prime example of a problem whose peak compute usage is far from the average. You want boatloads of compute when you are filling your hard drives, and 0 once they are full, with the occasional addition or replacement drive filling. This is what cloud compute was made for. It’s just that with the inefficiency of the software before, it wasn’t very economical.

Less if you leased Spot Instances - right now at $0.6705 per Hour (US East, Ohio)

Being pre-empted is not the end of the world for jobs that only take a few minutes - it is easy to arrange backups to soak up any peak load
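As a rough illustration of the on-demand versus spot difference, here is a small Python sketch of compute cost per plot. It assumes the quoted spot price applies to the same m6g.metal instance and the same 3-minute plot time, and it ignores storage and egress; the `lost_fraction` parameter (share of plots lost to pre-emption) is a hypothetical knob, not a measured number.

```python
# Compute-only cost per plot, ignoring storage and egress.
# Hourly rates are the ones quoted in the thread; the rest is assumed.

ON_DEMAND_RATE = 2.5     # $/hour, on-demand price quoted above
SPOT_RATE = 0.6705       # $/hour, spot price quoted above (US East, Ohio)


def cost_per_plot(hourly_rate, minutes_per_plot=3.0, lost_fraction=0.0):
    """Dollar cost of one finished plot.

    lost_fraction is the (hypothetical) share of plots thrown away
    because the instance was pre-empted mid-plot.
    """
    if not 0.0 <= lost_fraction < 1.0:
        raise ValueError("lost_fraction must be in [0, 1)")
    plots_per_hour = 60.0 / minutes_per_plot
    return hourly_rate / plots_per_hour / (1.0 - lost_fraction)


if __name__ == "__main__":
    print(f"on-demand:           ${cost_per_plot(ON_DEMAND_RATE):.4f} per plot")
    print(f"spot, no losses:     ${cost_per_plot(SPOT_RATE):.4f} per plot")
    print(f"spot, 10% plots lost: ${cost_per_plot(SPOT_RATE, lost_fraction=0.1):.4f} per plot")
```

Even with a pessimistic loss rate, the spot cost per plot stays well below the on-demand figure under these assumptions, which is why short jobs tolerate pre-emption well.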

Spot instances won’t work, as the instance is pulled when capacity is not available and all your plotting will go down, unless you are making a plot REALLY fast!

ALSO, would Chia plotting work on ARM cores?

The plotter is open source, all you need is to compile it. So yeah, I do not see why it wouldn’t work

So that means an ARM-compiled build is needed, OR we can run it in Docker as well!

I don’t see what you mean. If you use Linux you are compiling it anyway when you run install.sh. No need for docker here

I think the hint here is that we are getting so fast that we are encroaching on the “real time” plotting region - where we might be able to make a plot that contains what is being requested without any pre-calculation

I realize it is not as simple as “how fast can i generate 1 plot”

For this purpose a Spot Instance is fine as you get to target each challenge every 18 seconds or so - it doesn’t matter if you miss one or two, or ten - as long as you are quick enough to pick off the odd challenge, that is all you need

Depending on whether the task is fairly partitionable, it might be possible to link a few of these instances together with fast networking and low latency comms (1-10 Gbit Ethernet can exchange messages in < 1 µs and sustain < 5 µs for 99.9% of messages)

Max is “already” working on “shift onto GPU”.

As of now, if your best GPU becomes unprofitable on ETH, you might soon be able to just run it non-stop to find Chia proofs.

Keep in mind that many projects have modified their mining algorithms to eliminate GPU usage. While I am not familiar with the intricacies of Chia, this could also be a possibility if GPU usage becomes problematic. Definition of problematic is key here.

Not saying it’s impossible to GPU-optimise parts of the algorithm, but you would need to find a way to seriously reduce the memory requirement before that is possible for the whole algorithm. 128GB VRAM is not a thing (yet).

With GPUs, will drive/RAM write speeds be the eventual bottleneck?