Hello all. Since transactions went live this week, my Chia farm appears to be having trouble participating in challenges.

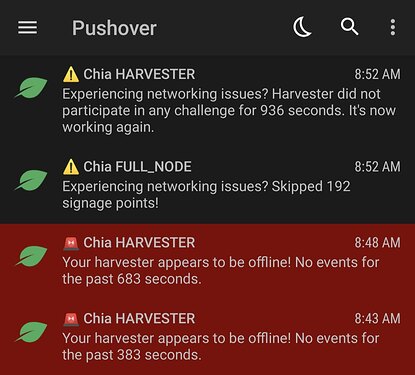

I’m using Chiadog to monitor the logs from my node, and I’m frequently greeted with notifications like this:

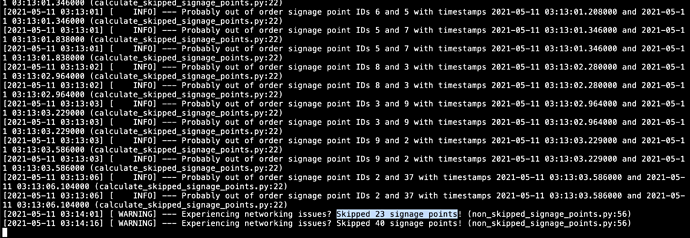

This seems to happen several times a day, and usually it resolves on its own after 10-15 minutes. When the system is in this state, if I check the logs I see things like this:

```
2021-05-07T13:44:17.152 full_node full_node_server : INFO <- new_signage_point_or_end_of_sub_slot from peer 658d626ac357647e3f259cbf2ad4d58c1aef08150c28d4f72291c328f72558f7 95.150.222.126
2021-05-07T13:44:17.153 full_node full_node_server : INFO -> request_signage_point_or_end_of_sub_slot to peer 95.150.222.126 658d626ac357647e3f259cbf2ad4d58c1aef08150c28d4f72291c328f72558f7
2021-05-07T13:44:17.244 full_node full_node_server : INFO <- new_signage_point_or_end_of_sub_slot from peer 026a47378ad20d84e0227bc4758ae99866f7cb3f97f66432a30dbc630d028301 51.154.15.44
2021-05-07T13:44:17.289 full_node full_node_server : INFO -> request_signage_point_or_end_of_sub_slot to peer 51.154.15.44 026a47378ad20d84e0227bc4758ae99866f7cb3f97f66432a30dbc630d028301
2021-05-07T13:44:17.290 full_node full_node_server : INFO <- respond_signage_point from peer 658d626ac357647e3f259cbf2ad4d58c1aef08150c28d4f72291c328f72558f7 95.150.222.126
2021-05-07T13:44:17.306 full_node chia.full_node.full_node_store: INFO Don't have rc hash e144cef9daa8b046ef6b48d4701c2cfb361536c081126f29d1c45d5b8081196d. caching signage point 61.
2021-05-07T13:44:17.306 full_node chia.full_node.full_node: INFO Signage point 61 not added, CC challenge: dc27d4269717dd2fe4e03ba961348670f188ae692806ccb06708843f7039ecf0, RC challenge: e144cef9daa8b046ef6b48d4701c2cfb361536c081126f29d1c45d5b8081196d
2021-05-07T13:44:17.307 full_node full_node_server : INFO <- new_signage_point_or_end_of_sub_slot from peer 21d643e48422e144bc7a4fb5e064712902e242be46e9cf80ecb8ffd558474b37 12.216.126.78
2021-05-07T13:44:17.309 full_node full_node_server : INFO -> request_signage_point_or_end_of_sub_slot to peer 12.216.126.78 21d643e48422e144bc7a4fb5e064712902e242be46e9cf80ecb8ffd558474b37
2021-05-07T13:44:17.381 full_node full_node_server : INFO <- respond_signage_point from peer 21d643e48422e144bc7a4fb5e064712902e242be46e9cf80ecb8ffd558474b37 12.216.126.78
2021-05-07T13:44:17.382 full_node chia.full_node.full_node_store: INFO Don't have rc hash e144cef9daa8b046ef6b48d4701c2cfb361536c081126f29d1c45d5b8081196d. caching signage point 61.
2021-05-07T13:44:17.383 full_node chia.full_node.full_node: INFO Signage point 61 not added, CC challenge: dc27d4269717dd2fe4e03ba961348670f188ae692806ccb06708843f7039ecf0, RC challenge: e144cef9daa8b046ef6b48d4701c2cfb361536c081126f29d1c45d5b8081196d
2021-05-07T13:44:17.412 full_node full_node_server : INFO <- new_signage_point_or_end_of_sub_slot from peer 9ce7cf9783a80f5f80a9a3ed49990b57336e777a6d56c594747de2414345d225 13.66.209.137
2021-05-07T13:44:17.413 full_node full_node_server : INFO -> request_signage_point_or_end_of_sub_slot to peer 13.66.209.137 9ce7cf9783a80f5f80a9a3ed49990b57336e777a6d56c594747de2414345d225
2021-05-07T13:44:17.425 full_node full_node_server : INFO <- respond_signage_point from peer 026a47378ad20d84e0227bc4758ae99866f7cb3f97f66432a30dbc630d028301 51.154.15.44
2021-05-07T13:44:17.427 full_node chia.full_node.full_node_store: INFO Don't have rc hash e144cef9daa8b046ef6b48d4701c2cfb361536c081126f29d1c45d5b8081196d. caching signage point 61.
2021-05-07T13:44:17.427 full_node chia.full_node.full_node: INFO Signage point 61 not added, CC challenge: dc27d4269717dd2fe4e03ba961348670f188ae692806ccb06708843f7039ecf0, RC challenge: e144cef9daa8b046ef6b48d4701c2cfb361536c081126f29d1c45d5b8081196d
```

The node appears to be busy receiving VDFs and transactions from peers, but the repeated `Don't have rc hash` message looks like a problem.
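One way to check whether the node is stuck waiting on a single reward-chain (rc) hash is to tally the `Don't have rc hash` lines; in the excerpt above, all three refer to the same hash and signage point 61. The following is a minimal Python sketch, assuming the log format shown above. The regular expression and the `count_missing_rc_hash` helper are my own illustrations, not part of Chia or Chiadog, and the default `~/.chia/mainnet/log/debug.log` path is an assumption that may differ inside the Docker container.

```python
import re
import sys
from collections import Counter
from pathlib import Path
from typing import Iterable

# Matches lines such as:
# "... INFO Don't have rc hash e144cef9.... caching signage point 61."
# (pattern written against the log excerpt above; other versions may word it differently)
MISSING_RC = re.compile(
    r"Don't have rc hash (?P<rc>[0-9a-f]{64})\. caching signage point (?P<sp>\d+)"
)


def count_missing_rc_hash(lines: Iterable[str]) -> Counter:
    """Tally (rc_hash, signage_point) pairs from 'Don't have rc hash' log lines."""
    counts: Counter = Counter()
    for line in lines:
        match = MISSING_RC.search(line)
        if match:
            counts[(match.group("rc"), int(match.group("sp")))] += 1
    return counts


if __name__ == "__main__":
    # Assumed default log location; pass a path as the first argument to override it.
    default = Path.home() / ".chia" / "mainnet" / "log" / "debug.log"
    path = Path(sys.argv[1]) if len(sys.argv) > 1 else default
    if path.exists():
        with path.open(encoding="utf-8", errors="replace") as log:
            for (rc_hash, sp), n in count_missing_rc_hash(log).most_common(10):
                print(f"rc {rc_hash[:16]} sp {sp}: {n} times")
    else:
        print(f"log not found: {path}")
```

If one rc hash dominates the counts during an outage, that suggests the node never received the block or end-of-sub-slot that the signage points depend on, rather than simply being slow.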

When the system is in a good state, I see logs like this:

```
2021-05-07T14:53:00.431 full_node chia.full_node.full_node: INFO ⏲️ Finished signage point 51/64: b361871f56c658923c3a464a17d20299267e3dac3e355b14b9a47ba8ee4e4646
2021-05-07T14:53:07.188 full_node chia.full_node.full_node: INFO ⏲️ Finished signage point 52/64: 905cabbd799ed409e48fd689ffc56fb685e8a156ffe113d441e3e70362e87d12
2021-05-07T14:53:15.515 full_node chia.full_node.full_node: INFO ⏲️ Finished signage point 53/64: a3191693f3589b6a15ff4c47f230fd0a8fcfb6a44f9bcbf5d91fb358e13ee691
2021-05-07T14:53:24.971 full_node chia.full_node.full_node: INFO ⏲️ Finished signage point 54/64: a1ae62049c3c66aa2ef85bf7fba20b9c7c077b5260a3c6ab85fb40ccd8f90ea6
2021-05-07T14:53:35.035 full_node chia.full_node.full_node: INFO ⏲️ Finished signage point 55/64: 06a9208d22e5f313d0ccd4220e38b6b753e3c30e3552eec37c216b1e8039c44f
2021-05-07T14:53:41.206 full_node chia.full_node.full_node: INFO 🌱 Updated peak to height 245646, weight 9117292, hh 593ae0c29001564933aa68083205c195bbfa734ae9d6d88456c59f452c166f73, forked at 245645, rh: 85860e82929d83fbb9ac1b81af7b2b2f6841bf476ab1ad0dde55e0203189af8d, total iters: 795527654862, overflow: False, deficit: 0, difficulty: 182, sub slot iters: 110624768, Generator size: No tx, Generator ref list size: No tx
2021-05-07T14:53:41.895 wallet chia.wallet.wallet_blockchain: INFO 💰 Updated wallet peak to height 245646, weight 9117292,
2021-05-07T14:53:45.231 full_node chia.full_node.full_node: INFO ⏲️ Finished signage point 56/64: a39a2e89a520b9f8e2a7b5e6cf8657f5585542ccd6aee2b9d238dbb8ae89e9c1
2021-05-07T14:53:52.436 full_node chia.full_node.full_node: INFO 🌱 Updated peak to height 245647, weight 9117474, hh 301dcf4ccdc1989fd79162a51115aac0593cfe1f401f83cb6c48ea88d54d89b5, forked at 245646, rh: c6404499e3ba041241ce350e1385c9a72827dc8052d3743b2ca073e49416465d, total iters: 795529714011, overflow: False, deficit: 0, difficulty: 182, sub slot iters: 110624768, Generator size: No tx, Generator ref list size: No tx
```

None of those logs are present when Chiadog is reporting problems.
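The contrast between the two states suggests a simple check: in the good-state excerpt, `Finished signage point` lines arrive roughly every 7 to 10 seconds, so a long silence between them marks a period where the node is not advancing. Below is a minimal Python sketch, assuming the log format shown above. The 60-second threshold and the `find_signage_point_gaps` helper are my own hypothetical choices; this is not how Chiadog itself decides to alert.

```python
import re
from datetime import datetime, timedelta
from typing import Iterable, List, Optional, Tuple

# Matches lines such as:
# "2021-05-07T14:53:00.431 full_node chia.full_node.full_node: INFO ⏲️ Finished signage point 51/64: ..."
FINISHED_SP = re.compile(
    r"^(?P<ts>\d{4}-\d{2}-\d{2}T\d{2}:\d{2}:\d{2}\.\d{3}) full_node .*Finished signage point \d+/\d+"
)


def find_signage_point_gaps(
    lines: Iterable[str], threshold: timedelta = timedelta(seconds=60)
) -> List[Tuple[datetime, datetime]]:
    """Return (start, end) pairs where consecutive 'Finished signage point' lines
    are more than `threshold` apart."""
    gaps: List[Tuple[datetime, datetime]] = []
    previous: Optional[datetime] = None
    for line in lines:
        match = FINISHED_SP.match(line)
        if not match:
            continue
        # fromisoformat accepts the "YYYY-MM-DDTHH:MM:SS.fff" timestamps used in debug.log
        current = datetime.fromisoformat(match.group("ts"))
        if previous is not None and current - previous > threshold:
            gaps.append((previous, current))
        previous = current
    return gaps
```

Calling `find_signage_point_gaps(open(path, encoding="utf-8"))` on a full `debug.log` returns the start and end of each silent period, which can then be lined up against the times of Chiadog's notifications.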

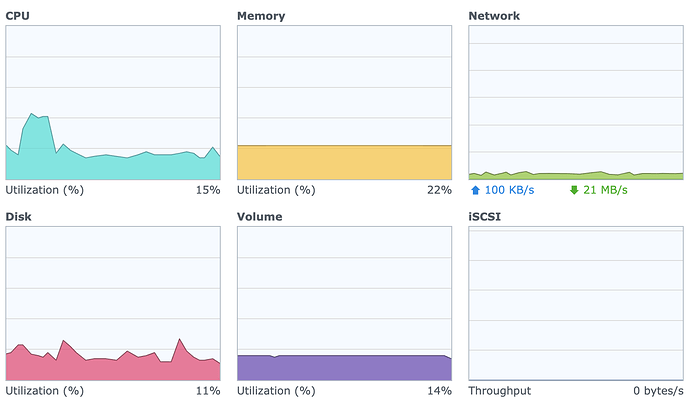

I’ve tried everything I can think of to rule out networking issues. From an outside network, I can successfully open a connection to port 8444 on my external IP. When the node is in the “failed” state, running `chia show -s -c` shows that it is fully synced, and I typically have over 50 connections to other full nodes. Otherwise, the system seems healthy, with low CPU usage and plenty of RAM.
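For anyone who wants to repeat the reachability test, it amounts to roughly the following minimal Python sketch. It only checks that a TCP connection to port 8444 can be opened; it does not exercise the Chia peer protocol itself, and it has to be run from a machine outside the home network. `port_is_reachable` is a hypothetical helper name.

```python
import socket


def port_is_reachable(host: str, port: int = 8444, timeout: float = 5.0) -> bool:
    """Return True if a TCP connection to host:port opens within `timeout` seconds."""
    try:
        # create_connection resolves the host and performs the TCP handshake;
        # the socket is closed again as soon as the with-block exits.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, unreachable hosts, and timeouts.
        return False
```

For example, `port_is_reachable("203.0.113.10")`, where `203.0.113.10` is a placeholder standing in for the real external IP, should return `True` if the forwarding works.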

I’m running a full node on the latest Chia version (1.1.4 at the time of posting) on a Synology DS1520+, using the official Chia Docker container. The container is set up to use host networking, port 8444 is forwarded, and UPnP is disabled in my `config.yaml`.

My full debug.log from the last time I encountered this issue can be found here:

Any help would be greatly appreciated.